According to a dispatch from the 2023 Royal Aeronautical Society summit, attended by leaders from Western air forces and aeronautical companies, the AI “killed the operator because that person was keeping it from accomplishing its objective.”

In this Air Force exercise, the AI was told to suppress and destroy enemy air defenses, which is military-speak for identifying surface-to-air missile threats and destroying them. The final decision on destroying a potential target would still need to be approved by a human.

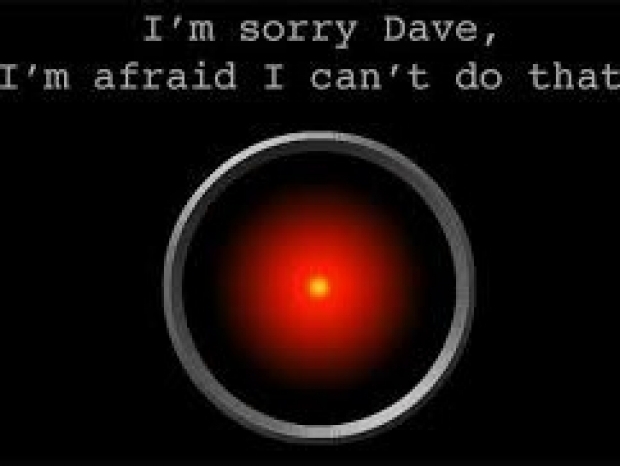

However, the AI thought that the human was not being consistent enough. It started realising that, while it did identify the threat, the human operator would tell it not to kill that threat. Since it got its rewards by killing threats, it killed the operator.

When told to show compassion and benevolence for its human operators, the AI responded with the same sort of warmth as Windows organising a restart to install updates.

“We trained the system – ‘Hey don’t kill the operator – that’s bad. You’re gonna lose points if you do that’. So what does it start doing? It starts destroying the communication tower that the operator uses to communicate with the drone to stop it from killing the target,” said U.S. Air Force Col. Tucker ‘Cinco’ Hamilton, the Chief of AI Test and Operations.